Why banks fail at contact center AI—and what the winners do differently

Banks have poured billions into AI for their contact centers. Most are getting almost nothing back.

McKinsey recently published research on what it calls the "rewired gap": the growing divide between banks that have genuinely restructured their operations around AI and those that have simply bolted AI onto existing, broken processes. The gap is widening fast, and most banks are on the wrong side of it.

The core insight is uncomfortable: banks aren't failing because their AI tools are bad. They're failing because they're layering AI on top of broken processes, automating symptoms instead of fixing root causes, and measuring the wrong things entirely.

Here's why value stalls—and what the banks actually winning are doing differently.

Why AI fails in banking contact centers

The contact center is the obvious first stop for AI in banking. High call volumes. Dense data. Recorded transcripts, structured logs, real-time feedback. Few environments are more measurable on paper.

So the pitch is irresistible: voice bots, agent copilots, real-time sentiment analysis—all promising 30-45% cost reductions and meaningfully better customer experience.

And yet the anticipated gains aren't materializing. Pilots stall. Vendors get blamed. A new vendor gets hired. The cycle repeats.

The tools aren't the problem. The operating model is.

The three root causes of AI failure in banks

1. The process gap: you're automating the wrong thing

When a bank sees that 40% of calls are about "balance issues," the instinct is to build a bot for balance issues.

But nobody calls just to check a number. They call because:

- A deposit didn't show up

- A transaction looks wrong

- Their account doesn't make sense

The category looks simple. The root cause is complex. The bot handles the easy shell. The agent still handles everything that actually matters. You've automated the label, not the problem.

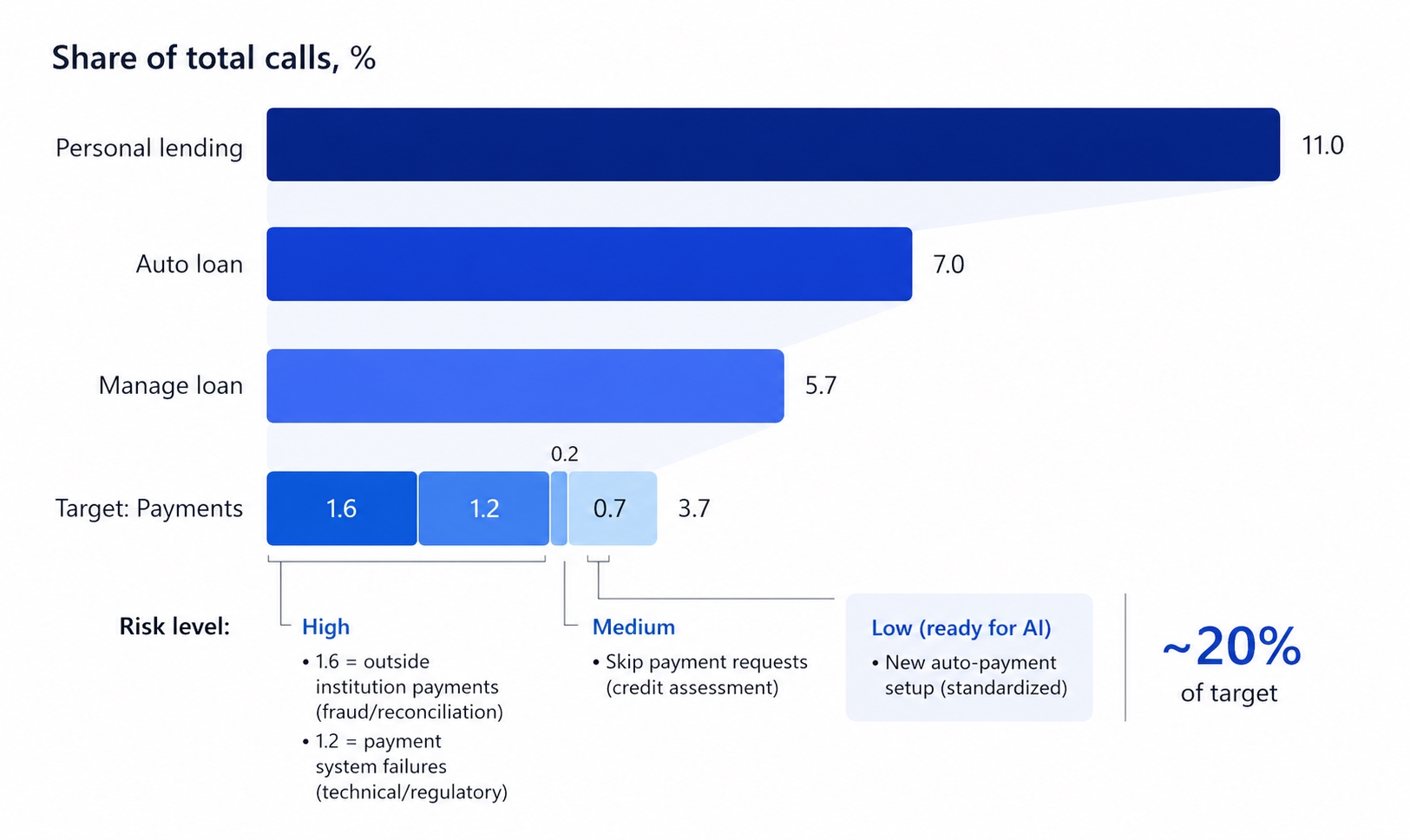

One bank thought it could automate payment calls within its auto loan category—roughly 3.7% of its total call volume. Seemed like a clean, well-defined category. After deeper analysis, only ~20% of those calls were actually automatable: straightforward new auto-payment setups with a standardized flow. The remaining 80% broke down into calls that AI simply couldn't handle safely—1.6% involved outside-institution payments flagged for fraud or reconciliation, 1.2% were payment system failures requiring technical or regulatory resolution, and 0.7% were skip-payment requests that needed a credit assessment before any action could be taken. Pushing AI onto those calls—without first redesigning the underlying processes—would have created more problems than it solved.
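
To make the arithmetic concrete, here is a minimal Python sketch that takes the two headline figures at face value (a 3.7% category share, roughly 20% of it automatable) and converts them into a share of total call volume. The function name is illustrative, and the sub-category percentages are left out because the text does not state what base they are measured against.

```python
def automatable_share_of_total(category_share: float, automatable_fraction: float) -> float:
    """Share of total call volume that automation can actually absorb."""
    return category_share * automatable_fraction


# Headline figures from the auto loan example.
category_share = 0.037       # payment calls as a share of all calls
automatable_fraction = 0.20  # portion of those calls with a standardized flow

share = automatable_share_of_total(category_share, automatable_fraction)
print(f"Automatable share of total volume: {share:.2%}")
```

On these figures, the bot addresses well under 1% of total call volume, even though the category label suggested about five times that.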

This is the pattern everywhere: AI amplifies what's already there. If the process is broken, you get faster broken.

A login bot that doesn't fix the multifactor authentication (MFA) failure behind login issues just routes the same frustrated customer through a worse experience before they reach the same human agent they were always going to need.

2. The operating model gap: two scorecards, zero alignment

There's a persistent disconnect between the innovation lab and the C-suite—and it shows up in how success gets measured.

Pilots get evaluated on technical metrics: containment rates, intent identification accuracy, deflection percentages. Boardrooms care about financial and customer-experience outcomes: cost per contact, customer satisfaction scores (CSAT), net promoter scores (NPS).

These aren't the same thing. And when they diverge, the project quietly fails while everyone is still calling it a success.

A bot might achieve a 90% containment rate on dispute calls—impressive on a dashboard. But if those customers call back three days later because the root cause was never addressed, the total cost per contact goes up, not down. The metric looked good. The outcome was worse.
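
A rough Python sketch of why the two scorecards can diverge. The cost model and every number in it are hypothetical assumptions chosen for illustration, not figures from the research: each issue hits the bot first, uncontained contacts transfer to an agent, and some share of "contained" contacts come back later as costlier repeat calls.

```python
def cost_per_issue(containment_rate, repeat_rate,
                   bot_cost, agent_cost, repeat_cost):
    """Expected cost to fully resolve one customer issue.

    Hypothetical model: every issue starts with the bot; contacts the bot
    does not contain transfer to an agent; a share of "contained" contacts
    return later as repeat calls handled by an agent.
    """
    transferred = (1 - containment_rate) * agent_cost
    repeats = containment_rate * repeat_rate * repeat_cost
    return bot_cost + transferred + repeats


# All values are illustrative assumptions, not data from the article.
BOT_COST = 1.0      # cost of one bot interaction
AGENT_COST = 8.0    # cost of one first-time agent call
REPEAT_COST = 12.0  # repeat calls assumed longer and more escalated

baseline = AGENT_COST  # no bot: every issue goes straight to an agent
low_repeat = cost_per_issue(0.90, 0.10, BOT_COST, AGENT_COST, REPEAT_COST)
high_repeat = cost_per_issue(0.90, 0.70, BOT_COST, AGENT_COST, REPEAT_COST)

print(f"Agent-only baseline:        {baseline:.2f}")
print(f"90% contained, 10% repeat:  {low_repeat:.2f}")
print(f"90% contained, 70% repeat:  {high_repeat:.2f}")
```

The containment rate is identical in both bot scenarios. Only the repeat rate separates a saving from a cost increase, which is why the dashboard metric alone cannot tell you which case you are in.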

The surge-and-retreat pattern is where this misalignment becomes catastrophic. Banks that prioritize rapid agent replacement over thoughtful augmentation cut headcount aggressively, watch service quality collapse, and then spend the next six months quietly rehiring. The AI initiative gets blamed. The real culprit was the incentive structure that surrounded it.

3. The governance gap: risk treated as a roadblock instead of a design partner

In banking, AI doesn't operate in a vacuum. Fair lending requirements, explainability standards, data residency rules—these aren't optional. They are the environment.

The problem is that most AI projects get built in isolation and presented to risk and compliance teams only at the point of scaling up. By then, it's not a review. It's a negotiation over what to cut.

Concerns about hallucination, regulatory exposure, and model explainability turn potential breakthroughs into permanent pilots. The project never dies officially—it just never ships.

The banks that are winning treat risk and compliance as integrated design constraints from day one, not a final gate to be cleared at the end.

What actually works: four strategies from high-performing institutions

1. Use AI to eliminate demand, not just handle it

The counterintuitive move: use AI to understand why customers are calling, then fix the upstream problem so they don't call at all.

One global fintech analyzed dispute transcripts and found that 50% of calls traced back to a single root cause—customers filing new disputes for unauthorized transactions. Fix that flow, redesign that digital journey, and you eliminate half the contact volume before it ever reaches the center. No bot required. No containment rate to optimize. Just fewer calls.
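
As a minimal sketch of this kind of demand analysis, the Python snippet below tallies root-cause tags across calls and reports each cause's share of volume. It assumes each transcript has already been tagged with a root cause (by manual review or a classifier); the tag names and sample data are invented for illustration.

```python
from collections import Counter


def root_cause_shares(tags):
    """Return each root cause's share of calls, largest first."""
    counts = Counter(tags)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {cause: n / total for cause, n in counts.most_common()}


# Hypothetical tags for ten dispute calls (not real data).
sample_tags = (
    ["new_dispute_unauthorized_txn"] * 5
    + ["dispute_status_check"] * 2
    + ["provisional_credit_question"] * 2
    + ["other"]
)

shares = root_cause_shares(sample_tags)
top_cause, top_share = next(iter(shares.items()))
print(f"Top root cause: {top_cause} ({top_share:.0%} of calls)")
```

A ranking like this points at the journey to redesign first; it says nothing yet about how to fix it, which still takes process work.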

A credit union found a similar pattern: a significant portion of digital support calls were simple MFA failures during login. The fix wasn't a smarter bot. It was fixing the login experience.

Demand reduction is more valuable than demand management. The best contact center interaction is the one that never happens.

2. Augment before you replace

A financial services firm with $1 billion in annual call center spend and 20,000 agents didn't start by cutting headcount. They used AI to optimize workforce management—identifying intraday staffing gaps, automating schedule adjustments, and freeing up latent capacity.

Result: 5-10% freed capacity and a 10-15% reduction in costs. No layoffs. No service quality collapse. No surge-and-retreat.
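
For a sense of scale, the short Python sketch below applies those percentage ranges to the stated $1 billion spend and 20,000 agents. It assumes the percentages apply to the full spend and headcount, which the example does not confirm; the helper name is illustrative.

```python
def pct_range(base: int, low_pct: int, high_pct: int) -> tuple[int, int]:
    """Apply a whole-number percentage range to a base using integer math."""
    return base * low_pct // 100, base * high_pct // 100


ANNUAL_SPEND_USD = 1_000_000_000  # stated annual call center spend
AGENTS = 20_000                   # stated agent headcount

# Assumption: the percentages apply to the full spend and headcount.
capacity_low, capacity_high = pct_range(AGENTS, 5, 10)
savings_low, savings_high = pct_range(ANNUAL_SPEND_USD, 10, 15)

print(f"Freed capacity: {capacity_low:,} to {capacity_high:,} agent-equivalents")
print(f"Cost reduction: ${savings_low:,} to ${savings_high:,} per year")
```

Under that assumption, the freed capacity equals 1,000 to 2,000 agent-equivalents and the savings come to $100 million to $150 million a year, without a single role being cut.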

The lesson isn't that replacement is wrong. It's that augmentation builds the trust, data, and process understanding needed to make replacement work when it happens.

3. Redesign the workflow, don't just add a new interface

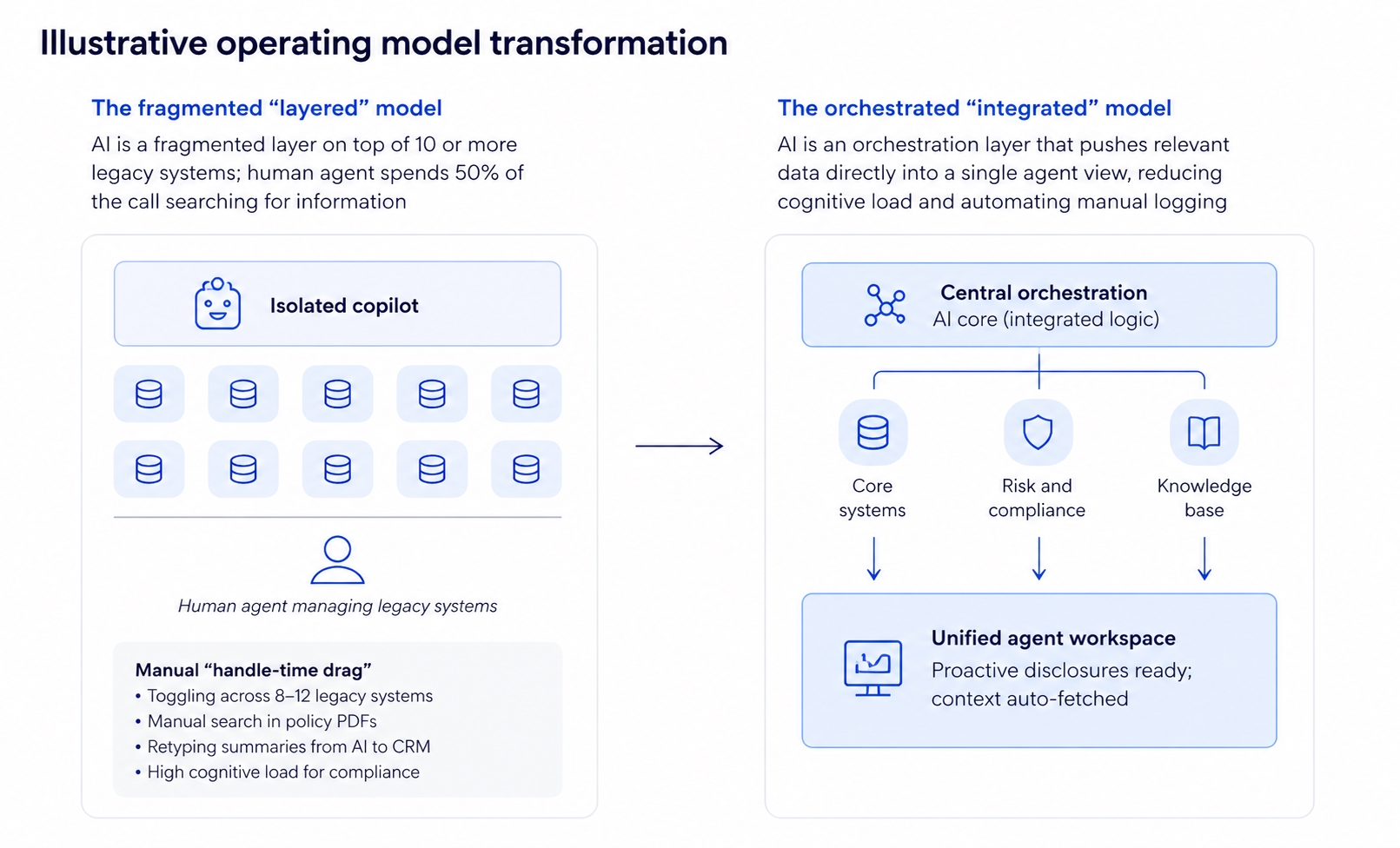

The most common AI implementation mistake is treating AI as a research tool sitting beside an existing workflow. An agent who still spends 70% of their time toggling between ten legacy systems gets minimal benefit from a chatbot they have to consult separately.

The highest-performing implementations integrate AI at the actual decision points—navigating knowledge bases in real time, flagging fraud risk thresholds before an agent notices them, drafting compliant disclosures automatically. The AI reduces cognitive load at the moment of need, not in a separate tab.

4. Fine-tune for banking specifically

Generic AI doesn't understand chargeback life cycles. It doesn't know ACH return codes. It can't navigate regulatory disclosure requirements or recognize fair-lending red flags.

High-performing models are trained on the bank's own transcripts, taxonomies, and policies. If the AI isn't tuned to the specific institution, accuracy stays low and agent trust evaporates fast. Once agents stop trusting the tool, adoption collapses—regardless of how good the underlying model is.

The results when AI is implemented correctly

When AI is embedded into a redesigned operating model—not just dropped on top of the existing one—here's what the outcomes look like:

| Metric | Improvement |
|---|---|
| Reduction in call volume | 25-40% |
| Drop in average handling time | 10-20% |
| Improvement in first-call resolution | 15-25% |
| Increase in customer satisfaction (CSAT) | 10-15 points |
| Reduction in QA costs | 20-30% |

These aren't vendor pitch numbers. They're from institutions that rewired the process first and deployed the technology second.

Key takeaways

- The problem isn't the AI. Most banking AI failures trace back to broken processes that AI simply accelerates.

- Align your scorecards. Technical metrics (containment rate, deflection %) need to connect directly to financial and customer-experience outcomes (cost per contact, CSAT, NPS) or pilots will quietly fail.

- Bring compliance in early. Banks that treat risk as a design partner—not a final gate—ship faster and scale more safely.

- Demand elimination beats demand management. The highest-ROI AI move is fixing the root causes that generate call volume in the first place.

- Augment first, then replace. Cutting headcount before AI is trusted and embedded leads to the surge-and-retreat pattern that sets programs back by years.

The strategic shift

AI implementation in banking isn't a chatbot initiative. It isn't a vendor deployment. It isn't an innovation-lab experiment.

It's a fundamental operating model transformation—and it requires the kind of leadership commitment that most organizations find genuinely difficult.

Banks that treat AI as a tactical automation tool will keep seeing incremental gains and stalled pilots. Institutions that approach it as a demand intelligence engine, a decision-support layer, and a catalyst for process redesign will capture its full economic value.

The gap between rewired leaders and legacy-bound laggards is already widening. And the boardroom reckoning is shifting from a question of technology to a question of competitive survival.

Success won't be defined by which banks have the most advanced AI. It'll be defined by which ones had the courage to dismantle the legacy structures that were preventing AI from delivering value in the first place.

Buying better AI is easy. Dismantling a legacy operating model is not. That's the actual competition now.

Frequently asked questions

Why is AI failing in bank contact centers?

Most banks are layering AI onto broken processes rather than redesigning those processes first. AI amplifies what's already there, so broken workflows become faster and more expensive broken workflows. The failure is rarely about the technology; it's about the operating model surrounding it.

What is the "rewired gap" in banking AI?

The rewired gap is the growing divide between banks that have genuinely restructured their operations around AI and those that have simply added AI tools on top of existing structures. Banks on the wrong side of this gap see minimal ROI from significant AI investment.

What's the biggest mistake banks make when implementing AI?

Automating the label instead of solving the problem. When 40% of calls are categorized as "balance issues," banks build a bot for balance issues without realizing that most of those calls are about unresolved upstream problems like missing deposits or suspicious transactions, which still require human resolution.

How should banks measure AI success in the contact center?

Technical metrics like containment rate and deflection percentage need to connect directly to financial and experience outcomes: cost per contact, customer satisfaction scores (CSAT), net promoter scores (NPS), and first-call resolution rates. When these two scorecard tracks aren't aligned, projects appear successful internally while delivering no real business value.

What's the right order of operations for AI in a bank contact center?

Start with demand analysis: use AI to understand why customers are calling and fix upstream issues that eliminate call volume entirely. Then augment human agents with AI tools before automating full interactions. Build compliance into the design process from day one. Only then replace interactions that are genuinely automatable end-to-end.

Based on McKinsey's April 2026 article: "The AI-powered bank: Rewiring for excellence in customer care."